Managing app containers at scale (especially as part of CI/CD or a DevOps pipeline) is impossible without automation. Around 57% of companies have 2 to 8 containers per app (31% operate in the 11 to 100 per-app range), so taking on dozens or hundreds of apps without container orchestration is not a viable long-term solution.

This article is an intro to container orchestration and the value of eliminating time-consuming tasks when managing containerized services and workloads. Read on to learn what this strategy offers and see how orchestration leads to more productive IT teams and improved bottom lines.

What Is Container Orchestration?

Container orchestration is the strategy of using automation to manage the lifecycle of app containers. This approach automates time-consuming tasks like (re)creating, scaling, and upgrading containers, freeing teams from repetitive manual work. Orchestration also helps manage networking and storage capabilities.

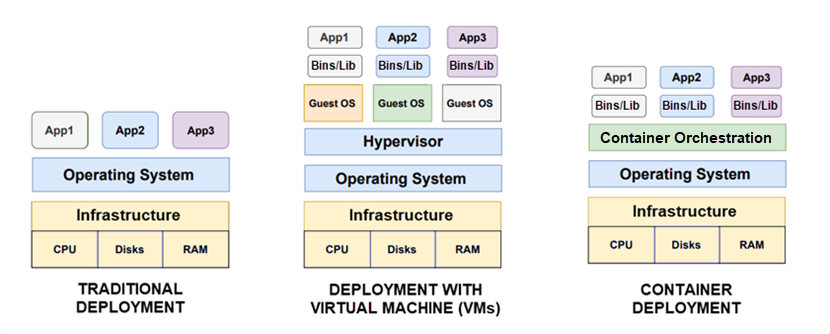

Containers are lightweight, executable app components that contain everything required for the code to run reliably in different IT environments. Teams break software into containers to make apps easier to run, move, update, and package. A container packages only:

- App code (source or compiled binaries).

- Operating system (OS) libraries.

- Dependencies required to run software (such as specific versions of programming language runtimes).

More portable and resource-efficient than a virtual machine (VM), containers (and the microservices they typically host) are the go-to compute strategy of modern software development and cloud-native architecture. However, there's a catch: the more containers there are, the more time and resources developers must spend to manage them.

Let's say you have 40 containers that require an upgrade. While performing a manual update is an option, it would take hours or even days of your time. That's where container orchestration comes in—instead of relying on manual work, you instruct a tool to perform all 40 upgrades via a single YAML file.
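The 40-container example maps to what most orchestrators call a rolling update. Below is a minimal Python sketch of the idea, under assumed names (the `Container` class, the image tags, and the `batch_size` parameter are all hypothetical): the tool swaps containers to the new image a few at a time so the rest of the app keeps serving traffic. Real tools add health checks and rollbacks, and expose similar knobs under their own names (Kubernetes, for example, uses `maxUnavailable` and `maxSurge`).

```python
from dataclasses import dataclass


@dataclass
class Container:
    name: str
    image: str  # hypothetical image reference, e.g. "shop/api:1.4"


def rolling_upgrade(containers, new_image, batch_size):
    """Upgrade containers in batches and return the names in each batch.

    Only `batch_size` containers are being replaced at any moment,
    so the remaining containers keep handling requests."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    batches = []
    for start in range(0, len(containers), batch_size):
        batch = containers[start:start + batch_size]
        for c in batch:
            # A real tool would stop the old container, start a new one,
            # and wait for it to pass health checks before continuing.
            c.image = new_image
        batches.append([c.name for c in batch])
    return batches


fleet = [Container(f"api-{i}", "shop/api:1.4") for i in range(40)]
batches = rolling_upgrade(fleet, "shop/api:1.5", batch_size=5)
print(len(batches))  # 8
print(all(c.image == "shop/api:1.5" for c in fleet))  # True
```

With `batch_size=5`, the 40 upgrades run as 8 batches. A smaller batch keeps more capacity online at the cost of a slower rollout.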

According to a recent survey, 70% of developers who work with containers report using a container orchestration platform. Also, 75% of these engineers state that they rely on a fully managed container orchestration service from a cloud provider. Unsurprisingly, the highest adoption rates for container orchestration are in DevOps teams.

Check out our comparison of containers and virtual machines (VMs) for a breakdown of all the differences between the two types of virtual environments.

What Is Container Orchestration Used For?

Container orchestration automates the many moving parts of containerized software. Capabilities differ between platforms, but most tools enable you to automate the following tasks:

- Container configuration and scheduling (when and how containers launch and stop, scheduling and coordinating activities of each component, etc.).

- Container provisioning and deployment.

- Scaling containers (both up and down) to balance workloads.

- Resource allocation and asset relocation (for example, if a container starts consuming too much RAM on a node, the platform can evict workloads and reschedule them on another node with free capacity).

- Load balancing and traffic routing.

- Cluster management.

- Service discovery (how microservices or apps locate each other on the network).

- Health monitoring, including failover and recovery processes (both for containers and hosts).

- Container availability and redundancy.

- Protection of container-to-container and container-to-host interactions.

- Container updates and upgrades.
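Several of these tasks (service discovery, load balancing, and health monitoring) work hand in hand. The sketch below is a toy in-memory registry, not any real platform's API (`ServiceRegistry` and its methods are hypothetical). It shows the core idea: replicas register under a service name, a failed health check takes a replica out of rotation, and requests are spread round-robin across the remaining healthy replicas.

```python
class ServiceRegistry:
    """Toy service registry with round-robin load balancing (illustration only)."""

    def __init__(self):
        self._replicas = {}  # service name -> replica addresses, in registration order
        self._healthy = {}   # address -> result of the latest health check
        self._cursor = {}    # service name -> number of requests routed so far

    def register(self, service, address):
        """Service discovery: a new replica announces itself under a service name."""
        self._replicas.setdefault(service, []).append(address)
        self._healthy[address] = True

    def mark_health(self, address, healthy):
        """Record a health-check result; unhealthy replicas leave the rotation."""
        self._healthy[address] = healthy

    def next_address(self, service):
        """Pick the next healthy replica for `service`, round-robin."""
        healthy = [a for a in self._replicas.get(service, []) if self._healthy[a]]
        if not healthy:
            raise LookupError(f"no healthy replicas for {service!r}")
        count = self._cursor.get(service, 0)
        self._cursor[service] = count + 1
        return healthy[count % len(healthy)]


registry = ServiceRegistry()
for addr in ["10.0.0.1:8080", "10.0.0.2:8080", "10.0.0.3:8080"]:
    registry.register("cart", addr)

registry.mark_health("10.0.0.2:8080", False)  # simulate a failed health check
picks = [registry.next_address("cart") for _ in range(4)]
print(picks)
# ['10.0.0.1:8080', '10.0.0.3:8080', '10.0.0.1:8080', '10.0.0.3:8080']
```

Production platforms implement the same pattern with DNS records, virtual IPs, or proxies, and run health checks on their own schedule rather than waiting for a manual `mark_health` call.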

Orchestration is not synonymous with automation—get an in-depth comparison of the two concepts in our orchestration vs. automation article.

How Does Container Orchestration Work?

Most orchestration platforms support a declarative configuration model that lets users define the desired outcome without providing step-by-step instructions. The developer writes a config file (typically in YAML or JSON) to describe the desired configuration, and the tool continuously works to bring the actual state of the system in line with that desired state. The role of this config file is to:

- Define which container images make up the app.

- Point the platform to where the images are stored (a container registry such as Docker Hub).

- Provision the containers with necessary resources (such as storage).

- Define and secure the network connections between containers.

- Provide instructions on how to mount storage volumes and where to store logs.
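To illustrate these roles, here is a hedged sketch of a desired-state config and a loader for it. Every orchestrator has its own schema (Kubernetes manifests and Docker Compose files both look different from this), so the field names (`containers`, `replicas`, `resources`, `volumes`, `networks`, `logging`) and the image names are hypothetical. JSON is used so the example runs with only the Python standard library; a YAML file would carry the same structure.

```python
import json

# Hypothetical desired-state config; field names are illustrative,
# not the schema of any particular orchestrator.
CONFIG = """
{
  "app": "shop",
  "containers": [
    {
      "name": "api",
      "image": "docker.io/example/shop-api:1.5",
      "replicas": 3,
      "resources": {"cpu": 0.5, "memory_mb": 256},
      "volumes": [{"name": "uploads", "mount_path": "/data/uploads"}],
      "networks": ["backend"]
    },
    {
      "name": "db",
      "image": "docker.io/library/postgres:16",
      "replicas": 1,
      "resources": {"cpu": 1.0, "memory_mb": 1024},
      "volumes": [{"name": "pgdata", "mount_path": "/var/lib/postgresql/data"}],
      "networks": ["backend"]
    }
  ],
  "logging": {"destination": "/var/log/shop"}
}
"""

REQUIRED_FIELDS = {"name", "image", "replicas", "resources"}


def load_desired_state(text):
    """Parse the config and check that each container entry has the required fields."""
    config = json.loads(text)
    for entry in config["containers"]:
        missing = REQUIRED_FIELDS - set(entry)
        if missing:
            raise ValueError(f"{entry.get('name', '?')}: missing {sorted(missing)}")
    return config


state = load_desired_state(CONFIG)
for c in state["containers"]:
    print(c["name"], c["image"], "x", c["replicas"])
```

Because the file only describes the outcome (which images, how many replicas, which resources), the same file can be committed to version control and applied unchanged to different environments.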

Most teams branch and version control config files so engineers can deploy the same app across different development and testing environments before production.

Once you provide the config file, the orchestration tool automatically schedules container deployment. The platform chooses the optimal host based on available CPU, memory, or other conditions specified in the config file (e.g., metadata labels or the proximity of a certain host). Most tools also let you specify a number of replicas, which the platform typically spreads across hosts to ensure container redundancy.
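The scheduling step can be sketched as a filter-then-score loop, which is roughly how the default Kubernetes scheduler is described, although real schedulers weigh many more factors. In this minimal Python version (the `Node` class and `schedule` function are hypothetical), nodes without enough free CPU or memory are filtered out, and the remaining nodes are ranked so that replicas land on different hosts first, with free memory as a tiebreaker.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    free_cpu: float      # free CPU cores
    free_memory_mb: int  # free memory in MB
    running: list = field(default_factory=list)  # names of containers placed here


def schedule(nodes, name, cpu, memory_mb, replicas):
    """Place `replicas` copies of a container using a filter-then-score approach.

    Filter: drop nodes without enough free CPU or memory.
    Score: prefer nodes not already running this container (spreads replicas
    for redundancy), then nodes with the most free memory."""
    placements = []
    for _ in range(replicas):
        candidates = [n for n in nodes
                      if n.free_cpu >= cpu and n.free_memory_mb >= memory_mb]
        if not candidates:
            raise RuntimeError(f"no node can fit another {name!r} replica")
        best = max(candidates, key=lambda n: (name not in n.running, n.free_memory_mb))
        # Reserve the resources on the chosen node.
        best.free_cpu -= cpu
        best.free_memory_mb -= memory_mb
        best.running.append(name)
        placements.append(best.name)
    return placements


cluster = [Node("node-a", 2.0, 2048), Node("node-b", 4.0, 4096), Node("node-c", 0.25, 8192)]
placements = schedule(cluster, "api", cpu=0.5, memory_mb=512, replicas=3)
print(placements)  # ['node-b', 'node-a', 'node-b']
```

Here `node-c` has plenty of memory but too little CPU, so it is filtered out. The first two replicas go to different hosts, and the third returns to `node-b` once every eligible node already runs a copy.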

Once the tool deploys a container, the platform manages the lifecycle of the containerized app by:

- Managing scalability, traffic routing, and resource allocation among the containers.

- Ensuring high levels of availability and performance of each container.

- Collecting and storing log data to monitor app health and performance.

- Attempting to fix issues and recover containers in case of failure (a.k.a., self-healing).
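Lifecycle management and self-healing are usually built around a reconciliation loop: the platform repeatedly compares the desired state from the config file with what is actually running and issues actions to close the gap. The Python sketch below shows one pass of such a loop under simplifying assumptions (the `reconcile` and `apply` functions, the state format, and the action names are hypothetical). A real controller would run it continuously and call container runtime APIs instead of editing a dictionary.

```python
def reconcile(desired, actual):
    """One pass of a reconciliation loop (simplified illustration).

    desired: {service: wanted replica count}, taken from the config file
    actual:  {service: [(container_id, healthy), ...]}, observed on the cluster
    Returns a list of (action, service, container_id) tuples.
    """
    actions = []
    for service in sorted(set(desired) | set(actual)):
        want = desired.get(service, 0)  # services missing from the config should not run
        running = actual.get(service, [])
        healthy = [cid for cid, ok in running if ok]
        # Self-healing: remove containers that failed their health checks...
        actions += [("remove", service, cid) for cid, ok in running if not ok]
        # ...then start or stop containers until the healthy count matches.
        if len(healthy) < want:
            actions += [("start", service, None)] * (want - len(healthy))
        else:
            actions += [("stop", service, cid) for cid in healthy[want:]]
    return actions


def apply(actions, actual):
    """Simulate executing the actions; returns the new observed state."""
    state = {service: list(containers) for service, containers in actual.items()}
    started = 0
    for action, service, cid in actions:
        containers = state.setdefault(service, [])
        if action == "start":
            started += 1
            containers.append((f"{service}-new{started}", True))
        else:  # "remove" or "stop"
            containers[:] = [c for c in containers if c[0] != cid]
    return state


desired = {"api": 3, "worker": 1}
actual = {
    "api": [("a1", True), ("a2", False)],    # a2 crashed
    "worker": [("w1", True), ("w2", True)],  # one replica too many
    "old-job": [("j1", True)],               # no longer in the config
}
actions = reconcile(desired, actual)
print(actions)
print(reconcile(desired, apply(actions, actual)))  # [] once the states match
```

The second call returning an empty list is the point of the design: once the cluster matches the config, the loop does nothing until something drifts again.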

Container orchestration works in any environment that supports containers, from traditional dedicated servers to any type of cloud deployment.

Looking to keep cloud agility while benefiting from the raw power of physical hardware? Our Bare Metal Cloud (BMC) is a best-of-both-worlds offering that enables you to deploy and manage dedicated bare-metal servers with cloud-like speed and simplicity.

What About Multi-Cloud Container Orchestration?

Multi-cloud is a cloud computing strategy in which you rely on services from two or more third-party providers. Multi-cloud container orchestration is the use of a tool to manage containers that run across multiple cloud environments instead of within a single infrastructure.

Setting up container orchestration across different providers is complex in some use cases, but the effort leads to multiple benefits, such as:

- Improved infrastructure performance.

- Opportunities for cloud cost optimization.

- Better container flexibility and portability.

- Less risk of vendor lock-in.

- More scalability options.

Containers and multi-cloud are an excellent fit. Multiple environments align with the containers' portable, "run anywhere" nature, while containerized apps unlock the full efficiency of relying on two or more cloud offerings.

Multi-cloud is a game-changer for many organizations looking to work with multiple cloud providers.

Check out our article on the differences between Unikernels and Containers.

Container Orchestration Benefits

Here are the main benefits of container orchestration:

- Quicker operations due to automation: Container orchestration saves significant time by automating tasks such as provisioning containers, load balancing, setting up configurations, scheduling, and optimizing networks.

- Simplified management: By their nature, containers introduce a large amount of complexity to day-to-day operations. A single app might have hundreds or thousands of containers, so things quickly get out of control without an orchestration platform.

- Increased employee productivity: Around 74% of companies report that their teams are more productive when they do not work on repetitive tasks. Teams release new features faster, which leads to quicker times-to-market (TTM) and more room for high-ROI innovation.

- Resource and cost savings: Container orchestration maintains optimal usage of processing and memory resources, removing unnecessary overhead expenses. Companies also save money by requiring fewer staff members to build and manage systems.

- Extra security: Container orchestration drastically reduces the chance of human error, the leading cause of successful cyber attacks. Over 73% of companies report security improvements after adopting orchestration. The strategy also isolates app processes and provides more visibility, which shrinks the attack surface.

- Better app quality: Almost 78% of teams report improved app quality due to container orchestration. Also, 73% of companies state that they improved user satisfaction due to better-performing apps.

- Better employee retention rates: Container orchestration frees teams from mundane work and makes room for more interesting tasks, which boosts job satisfaction and helps keep talent from leaving the company.

- Higher service uptime: A container orchestration platform detects and fixes issues like infrastructure failures faster than any human operator. Over 72% of companies report significantly lower service downtime following orchestration adoption.

Container orchestration is one of the prerequisites of efficient CI/CD. Learn about CI/CD pipelines and see why this workflow is becoming the go-to strategy for shipping software.

Top Container Orchestration Tools

To start using container orchestration, you're going to need a tool. Let's look at the five most popular options currently on the market.

Check out our article on container orchestration tools for a wider selection of platforms and an in-depth analysis of each tool's pros and cons.

Kubernetes

Kubernetes (K8s or Kube) is an open-source container orchestration tool for containerized workloads and services. Google donated K8s to the Cloud Native Computing Foundation (CNCF) in 2015, after which the platform grew into the world's most popular container orchestration tool.

According to a 2021 CNCF study, the number of Kubernetes engineers grew by 67% over the previous year, reaching an all-time high of 3.9 million. The popularity of K8s gave rise to various managed Kubernetes services and Kubernetes-based tools (all of which are worthwhile options if you are looking to get into orchestration), including:

- Amazon Elastic Kubernetes Service (EKS).

- Azure Kubernetes Service (AKS).

- Google Kubernetes Engine (GKE).

- VMware Tanzu.

- Knative.

- Istio.

- Cloudify.

- Rancher.

Main reasons to use Kubernetes

- Industry-leading functionality (service discovery, storage orchestration, automated rollouts and rollbacks, self-healing, IPv4/IPv6 dual-stacking, etc.) and an ever-expanding selection of open-source support tools.

- Advanced autoscaling: the Horizontal Pod Autoscaler (HPA) scales out, the Vertical Pod Autoscaler (VPA) scales up, and the Cluster Autoscaler optimizes the number of nodes.

- The backing of a giant community that continuously adds new features.
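To show what the HPA actually computes, the Kubernetes documentation describes its core scaling rule as `desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue)`. The function below is a simplified Python sketch of that rule. The `min_replicas`/`max_replicas` clamp mirrors the HPA's `minReplicas`/`maxReplicas` fields, and the 0.1 `tolerance` mirrors the controller's default; the real controller also handles multiple metrics, missing data, and stabilization windows, which this sketch ignores.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10, tolerance=0.1):
    """Simplified Horizontal Pod Autoscaler scaling rule:

        desired = ceil(current_replicas * current_metric / target_metric)

    Ratios within `tolerance` of 1.0 leave the replica count unchanged,
    and the result is clamped to [min_replicas, max_replicas]."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas  # close enough to target: avoid flapping
    desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))


# 4 replicas averaging 90% CPU against a 60% target -> ceil(4 * 1.5) = 6
print(desired_replicas(4, 90, 60))  # 6
# Load halves -> scale in to ceil(4 * 0.5) = 2
print(desired_replicas(4, 30, 60))  # 2
```

The tolerance band is a deliberate design choice: without it, small metric fluctuations around the target would add and remove replicas constantly.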

Docker Swarm

Docker Swarm is an open-source container orchestration platform known for its simple setups and general ease of use. Swarm is a native mode of Docker (a tool for containerization) that enables users to manage "Dockerized" apps.

Main reasons to use Docker Swarm

- Simple to set up and significantly easier to use than Kubernetes (there are also fewer features, making Swarm the right option for less complex use cases).

- A natural choice for Docker users looking for an easier and faster path to container deployments.

- A low learning curve makes Swarm ideal for less experienced teams and newcomers to container orchestration.

Read about the main differences between Kubernetes and Docker Swarm to evaluate which of the two tools is the better fit for your team.

Nomad

Developed by HashiCorp (the company behind Terraform, one of the best Infrastructure as Code tools on the market), Nomad is an orchestration tool for both containerized and non-containerized apps. You can use the platform as a stand-alone orchestrator or as a supplement to Kubernetes.

Main reasons to use Nomad

- A natural fit if you already use HashiCorp products like Terraform, Vault, or Consul.

- Small footprint (Nomad runs as a single binary and works on all major OSes).

- A flexible and easy-to-use orchestrator that supports workloads beyond containers (legacy apps, virtual machines, standalone binaries, etc.) alongside Docker workloads.

OpenShift

Red Hat's OpenShift is a leading Platform as a Service (PaaS) for building, deploying, and managing container-based apps. The platform extends Kubernetes functionalities and is a popular choice for continuous integration workflows due to its built-in Jenkins pipeline.

Main reasons to use OpenShift

- Cluster management through both a web UI and a CLI.

- A highly optimized platform that's an excellent fit for hybrid cloud deployments.

- A wide selection of templates and pre-built images for building databases, frameworks, and services.

Apache Mesos (With Marathon)

Mesos is an open-source platform for cluster management that, when combined with its native framework Marathon, becomes an excellent (albeit a bit pricey) container orchestration tool.

While not as popular as Kubernetes or Docker Swarm, Mesos is the orchestration tool of choice for a few big-name companies, including Twitter, Yelp, Airbnb, Uber, and PayPal.

Main reasons to use Mesos

- Highly modular architecture that lets users easily scale up to 10,000+ nodes.

- A lightweight, cross-platform orchestration platform with inherent flexibility and scalability.

- Mesos APIs support Java, C++, and Python, plus the platform runs on Linux, Windows, and macOS (OS X).

Kubernetes, Docker Swarm, and Apache Mesos went through the so-called "container orchestration war" during the early and mid-2010s. The race was on to determine which platform would become the industry standard for managing containers. K8s "won" on the 29th of November 2017 when AWS announced its Elastic Container Service for Kubernetes (now called Amazon Elastic Kubernetes Service, or EKS).

An Industry Standard for Containerized Apps

Containers have transformed the way we build and maintain software. Container orchestration has been at the heart of this evolution as it maximizes the benefits of microservices and drastically streamlines day-to-day operations. Managing containers effectively will continue to be a priority going forward, so expect orchestration to only become more prevalent in the world of containerized apps.

Next, learn how Kubernetes and Docker differ and how they are used together.