Introduction

Containers facilitate application deployment by increasing portability and consuming fewer system resources than traditional virtual machines. DevOps engineers use them to create workflows optimized for agile methodologies that promote frequent and incremental code changes.

Kubernetes and Docker are two popular container management platforms. While both tools deal with containers, their roles in the development, testing, and deployment of containerized apps are very different.

This article introduces you to the features and design of Kubernetes and Docker. It also explains how the tools differ and presents how they can work together.

What is Kubernetes?

Kubernetes is an open-source platform for container orchestration. It automates the synchronized, resource-efficient deployment, scaling, and management of multi-container applications, such as apps based on the microservices architecture.

Note: Kubernetes is a feature-rich tool. Our article on best Kubernetes practices outlines the best methods for creating stable and efficient clusters.

Kubernetes Features

As a container orchestrator, Kubernetes provides a comprehensive set of automation features in all stages of the software development life cycle. These features include:

- Container management. Kubernetes provides a way to create, run, and remove containers.

- App packaging and scheduling. The platform automates application packaging and ensures optimal resource scheduling.

- Services. Kubernetes services manage internal and external traffic to pods through IP addresses, ports, and DNS records.

- Load balancing. A Kubernetes load balancer is a service that routes traffic among cluster nodes and optimizes workload distribution.

- Storage orchestration. Kubernetes enables the automatic mounting of various storage types, such as local and network storage, public cloud storage, etc.

- Horizontal scaling. If the running pods cannot handle the workload, Kubernetes can add more pod replicas, and the cluster itself can be scaled out with additional nodes.

- Self-healing. If a pod containing an application or one of its components goes down, Kubernetes redeploys it automatically.

- Advanced logging, monitoring, and debugging tools. These tools help troubleshoot possible cluster issues.
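
To make the horizontal scaling feature more concrete, the sketch below applies the replica formula that the Kubernetes documentation gives for the Horizontal Pod Autoscaler: the current replica count multiplied by the ratio of the observed metric to the target metric, rounded up. The function name and the sample numbers are assumptions made for illustration, and the real autoscaler also applies a tolerance and minimum/maximum bounds that are left out here.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float, target_metric: float) -> int:
    """Replica count suggested by the documented autoscaler formula.

    desired = ceil(current_replicas * current_metric / target_metric)
    Tolerance and min/max replica limits are intentionally omitted.
    """
    if current_replicas < 1 or target_metric <= 0:
        raise ValueError("need at least one replica and a positive target")
    return math.ceil(current_replicas * (current_metric / target_metric))


# Hypothetical numbers: 4 pods averaging 90% CPU against a 60% target.
print(desired_replicas(4, 90.0, 60.0))
```

When the observed metric is below the target, the same formula yields a smaller replica count, which is how scale-down decisions follow from the same rule.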

Kubernetes Tools

Kubernetes has a rich ecosystem of tools designed to extend its functionality. These tools can be divided into several categories, according to the aspect of Kubernetes they improve upon.

- Cluster management tools introduce new ways to interact with a cluster. Some of them, like Portainer and Rancher, are extensive, feature-rich platforms for controlling multiple aspects of the cluster. Other tools help with specific tasks. For example, cert-manager assists in provisioning and managing TLS certificates.

- CI/CD integration tools assist DevOps teams in integrating Kubernetes into their CI/CD pipeline. These tools include Flux, a GitOps operator for Kubernetes, and Drone, a container-native CD platform.

- Monitoring tools such as Prometheus and Grafana help visualize Kubernetes data.

- Logging and tracing engines allow aggregation and analysis of system and application logs. The most popular among these engines is the ELK stack.

- Other important tools for Kubernetes include Istio, a service mesh for service management, and Minikube, a local Kubernetes implementation helpful for development and testing.

Note: Learn about Kubernetes objects.

How Does Kubernetes Work?

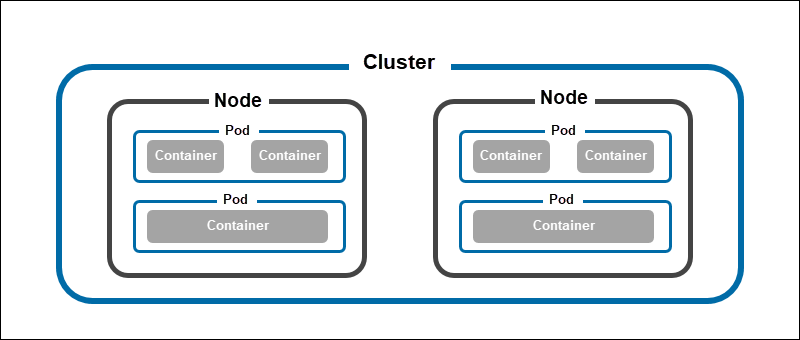

The entire Kubernetes architecture revolves around the concept of the cluster. A cluster is a set of nodes, which are physical or virtual machines that perform various functions. Each cluster consists of:

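- The control plane, running on one or more master nodes. Production clusters usually run multiple master nodes to avoid disruption and maintain high availability. In such setups, every kube-apiserver instance can serve requests, while components such as kube-scheduler and kube-controller-manager use leader election so that only one instance of each is active at any time.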

- Worker nodes, running containerized applications in pods, which are the smallest Kubernetes execution units.

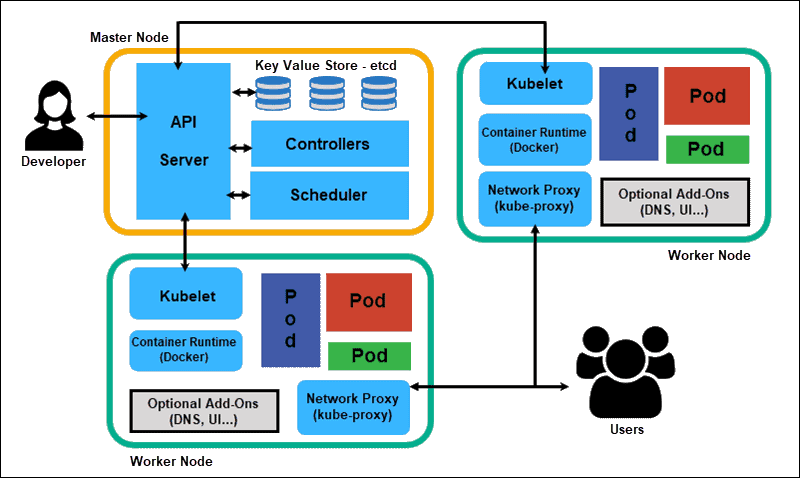

The control plane consists of multiple elements designed to maintain the cluster. These elements are:

- kube-apiserver, the front-end control plane component that exposes the Kubernetes API. An important property of kube-apiserver is that it scales horizontally: multiple instances of the component can run simultaneously, with traffic balanced between them to improve performance.

- etcd, the key-value store for cluster data.

- kube-controller-manager, the component that runs controller processes, such as the node controller for node monitoring, the job controller that manages Kubernetes jobs, token controllers, etc.

- kube-scheduler, the component that searches for pods with no node assigned and selects a node for each of them to run on.
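
The scheduling step described above can be sketched as a loop that filters out nodes without enough capacity and then picks the best remaining candidate. This is a deliberately simplified model, not the real scheduler: the `Node` and `Pod` classes, the CPU-only capacity check, and the "most free CPU wins" scoring rule are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Node:
    name: str
    free_cpu_millicores: int
    pods: List[str] = field(default_factory=list)


@dataclass
class Pod:
    name: str
    cpu_request_millicores: int
    node_name: Optional[str] = None  # None means the pod is still unscheduled


def schedule(pods: List[Pod], nodes: List[Node]) -> None:
    """Bind each unassigned pod to the feasible node with the most free CPU."""
    for pod in pods:
        if pod.node_name is not None:
            continue  # already bound to a node
        # Filter: keep only nodes that can satisfy the pod's CPU request.
        feasible = [n for n in nodes if n.free_cpu_millicores >= pod.cpu_request_millicores]
        if not feasible:
            continue  # no node fits, so the pod stays pending
        # Score: prefer the node with the most free CPU (hypothetical rule).
        best = max(feasible, key=lambda n: n.free_cpu_millicores)
        best.free_cpu_millicores -= pod.cpu_request_millicores
        best.pods.append(pod.name)
        pod.node_name = best.name


nodes = [Node("node-a", 1000), Node("node-b", 500)]
pods = [Pod("web", 600), Pod("db", 500), Pod("batch", 2000)]
schedule(pods, nodes)
for pod in pods:
    print(pod.name, "->", pod.node_name or "pending")
```

A pod that fits nowhere simply keeps an empty node assignment, so a later pass can place it once capacity becomes available.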

Each node in Kubernetes consists of:

- Container runtime, the software that runs containers, such as containerd, CRI-O, and other Kubernetes CRI implementations.

- kubelet, the agent component that ensures containers are running and functioning properly.

- kube-proxy, the network proxy element monitoring and maintaining network rules on the node.

Kubernetes is a highly automated platform that needs only an initial input describing the cluster's desired state. Once the user provides the desired parameters, Kubernetes ensures they are permanently enforced in the cluster. The initial parameters can be issued using the command line or described in a manifest YAML file.
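
As a rough illustration of the desired-state model, the sketch below describes a Deployment as a Python dictionary and shows a toy reconciliation step that compares the desired replica count with the pods that are actually running. The manifest fields follow the `apps/v1` Deployment schema, but the names, labels, and image tag are hypothetical, and `reconcile` is an invented helper rather than a Kubernetes API. The manifest is serialized to JSON only because the Python standard library has no YAML writer; `kubectl` accepts manifests in either format.

```python
import json

# Hypothetical Deployment manifest: names, labels, and image are illustrative.
deployment = {
    "apiVersion": "apps/v1",
    "kind": "Deployment",
    "metadata": {"name": "web"},
    "spec": {
        "replicas": 3,
        "selector": {"matchLabels": {"app": "web"}},
        "template": {
            "metadata": {"labels": {"app": "web"}},
            "spec": {"containers": [{"name": "web", "image": "nginx:1.25"}]},
        },
    },
}

manifest_json = json.dumps(deployment, indent=2)


def reconcile(desired_replicas, running_pods):
    """Return the actions that move the observed state toward the desired state."""
    diff = desired_replicas - len(running_pods)
    if diff > 0:
        # Too few pods: request one creation per missing replica.
        return [("create", None)] * diff
    if diff < 0:
        # Too many pods: delete the surplus, starting from the front of the list.
        return [("delete", name) for name in running_pods[:-diff]]
    return []  # observed state already matches the desired state


print(reconcile(deployment["spec"]["replicas"], ["web-1"]))
```

Running the same comparison repeatedly is what keeps the parameters enforced: whenever a pod disappears, the next pass reports a missing replica and a replacement is created.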

Kubernetes Use Cases

Kubernetes is used in various IT industry, business, and science scenarios. The following are some of the essential applications of Kubernetes:

- Large-scale app deployment

- Managing microservices

- CI/CD software development

- Serverless computing

- Hybrid and multi-cloud deployments

- Big data processing

- Computation

- Machine learning

For a detailed overview of the use cases listed above, refer to our Kubernetes Use Cases article.

Note: Kubernetes provides a straightforward way to configure and maintain a hybrid or multi-cloud ecosystem and achieve application portability. Learn more about this topic in Kubernetes for Multi-Cloud and Hybrid Cloud Portability.

What is Docker?

Docker is an open-source platform for the development, deployment, and management of containerized applications. Since containers are system-agnostic, Docker is a frequent choice for developing distributed applications.

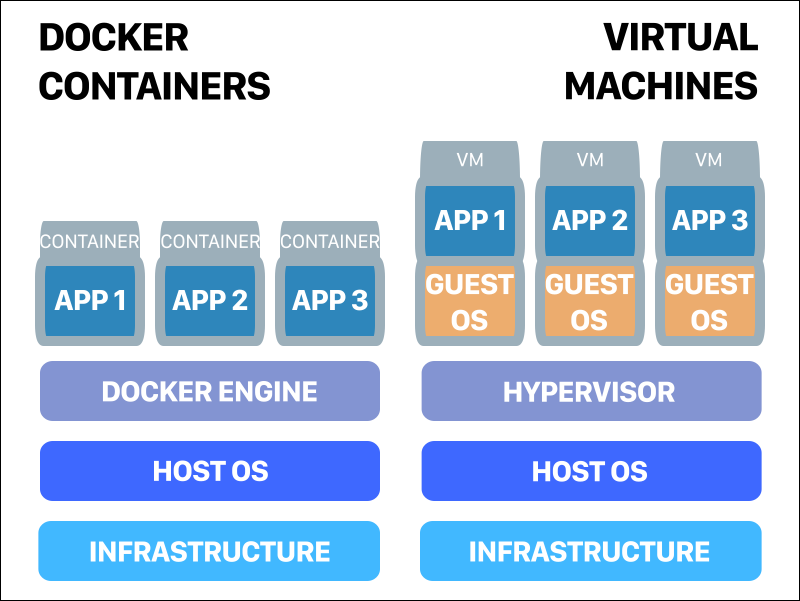

Using Docker containers for distributed apps helps developers avoid compatibility issues when designing applications to work on multiple OSs. The lightweight nature of the containers also makes them a better choice than traditional virtual machines.

Docker Features

Docker is a feature-rich tool that aims to be a complete solution for app containerization. Some of its essential characteristics are:

- App isolation. The Docker engine provides app isolation on a per-container level. This feature allows multiple applications to exist side-by-side on a server without the risk of possible conflicts. It also improves security by keeping the application isolated from the host.

- Scalability. Creating and removing containers is a simple process that allows scaling application resources.

- Consistency. Docker ensures that an app runs the same across multiple environments. Developers working on different machines and operating systems can work together on the same application without issues.

- Automation. The platform automates tedious, repetitive tasks and schedules jobs without manual intervention.

- Faster deployments. Since containers virtualize the OS, there is no boot time when starting up container instances. Therefore, deployments are complete in a matter of seconds. Additionally, existing containers can be reused when creating new applications.

- Easy configuration. Docker's command-line interface helps users configure their containers with simple and intuitive commands.

- Rollbacks and image version control. A container bases its contents on a Docker image with multiple layers representing changes and updates on the base. Not only does this feature speed up the build process, but it also provides version control over the container.

- Software-defined networking (SDN). Docker SDN support lets users define isolated container networks.

- Small footprint. The lightweight nature of containers makes Docker deployments resource-friendly. As containers do not include guest operating systems, they are much lighter and smaller than VMs. They use less memory and reuse components thanks to data volumes and images. Also, containers don't require large physical servers as they can run entirely on the cloud.

Docker Tools

Docker containers are the de facto standard in the IT industry today. Therefore, it is not surprising that many tools are available to help users extend the functionality of the Docker platform.

- Configuration management and deployment automation tools, such as Ansible, Terraform, and Puppet, help developers save time by automating repetitive provisioning, configuration, and security tasks.

- CI/CD tools assist in integrating Docker into the CI/CD pipeline. The most popular tool is Jenkins, an open-source platform for software build and delivery process automation.

- Management tools such as Portainer offer alternative ways of managing Docker containers.

- Networking platforms provide Docker users with secure network models, virtual networking, and other useful additional features. Calico and Flannel are among the best-known tools in this category.

- Schedulers, like Docker's own Docker Swarm, introduce container orchestration features to Docker workflows.

- Monitoring tools, such as Librato, Dynatrace, and Datadog, let users analyze metrics obtained from host and daemon logs, monitoring agents, and other sources.

Note: Our Docker monitoring tools article contains a comprehensive list of the most useful tools in this category.

How Does Docker Work?

Docker employs a client-server approach to container management. Each Docker setup consists of two main parts:

- The Docker daemon (dockerd) is a persistent background process that listens for Docker API requests and performs the requested management operations, such as manipulating containers, images, volumes, and networks.

- The Docker client allows users to issue commands, which are then passed to the Docker daemon through the Docker API. The client can be installed on the same machine as the daemon or on any number of additional machines.
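
The client-server split means that any program able to send HTTP requests to the daemon can act as a client. The sketch below is one way to do that with only the Python standard library, assuming a Linux host where the daemon listens on the default Unix socket `/var/run/docker.sock`. The `UnixHTTPConnection` class and the helper functions are hypothetical names written for this example; `GET /containers/json` with the `all` query parameter is the Docker Engine API endpoint for listing containers.

```python
import http.client
import json
import socket
from urllib.parse import urlencode

# Default daemon socket on Linux; an assumption about the host setup.
DOCKER_SOCKET = "/var/run/docker.sock"


class UnixHTTPConnection(http.client.HTTPConnection):
    """HTTPConnection that talks to a Unix domain socket instead of TCP."""

    def __init__(self, socket_path):
        super().__init__("localhost")  # host is only used for the Host header
        self.socket_path = socket_path

    def connect(self):
        sock = socket.socket(socket.AF_UNIX, socket.SOCK_STREAM)
        sock.connect(self.socket_path)
        self.sock = sock


def containers_path(include_stopped=False):
    """Build the request path for the container listing endpoint."""
    query = urlencode({"all": "true" if include_stopped else "false"})
    return f"/containers/json?{query}"


def list_containers(include_stopped=False):
    """Ask the daemon for its containers; requires a running Docker daemon."""
    conn = UnixHTTPConnection(DOCKER_SOCKET)
    try:
        conn.request("GET", containers_path(include_stopped))
        return json.loads(conn.getresponse().read())
    finally:
        conn.close()


print(containers_path(include_stopped=True))
```

Calling `list_containers()` on a machine with a running daemon should return the same information that the `docker ps` command displays, since the official client uses the same API.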

Working with Docker usually begins with writing a text file of instructions called a Dockerfile. The file tells Docker which commands to run and which resources to include when building a Docker image.

Docker images represent templates of an application at a specific point in time. The source code, dependencies, libraries, tools, and other files required for the application to run are packaged into the image.
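
The relationship between Dockerfile instructions and image layers can be modeled in a few lines. The sketch below is a simplified assumption, not Docker's actual algorithm: it derives one layer ID per instruction by hashing the instruction together with its parent layer's ID, which is enough to show why a rebuild can reuse every layer up to the first changed instruction. The Dockerfile contents are hypothetical, and the real build cache also considers the checksums of copied files.

```python
import hashlib

# Hypothetical Dockerfile for a small Python app, one instruction per entry.
DOCKERFILE = [
    "FROM python:3.12-slim",
    "WORKDIR /app",
    "COPY requirements.txt .",
    "RUN pip install -r requirements.txt",
    "COPY . .",
    'CMD ["python", "app.py"]',
]


def layer_ids(instructions):
    """Derive one ID per instruction, chained to the parent layer's ID."""
    ids, parent = [], ""
    for instruction in instructions:
        parent = hashlib.sha256((parent + instruction).encode()).hexdigest()[:12]
        ids.append(parent)
    return ids


def reused_layers(old, new):
    """Count the leading layers a rebuild could take from the cache."""
    count = 0
    for old_id, new_id in zip(layer_ids(old), layer_ids(new)):
        if old_id != new_id:
            break  # every later layer depends on this one, so stop here
        count += 1
    return count


edited = DOCKERFILE[:-1] + ['CMD ["python", "main.py"]']
print(reused_layers(DOCKERFILE, edited), "of", len(DOCKERFILE), "layers reused")
```

Because each ID depends on its parent, changing an early instruction invalidates everything after it, which is why frequently changing steps are usually placed near the end of a Dockerfile.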

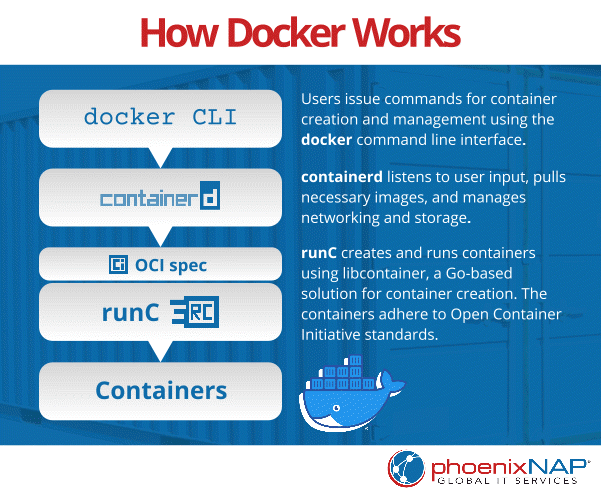

The process of container creation has three steps:

1. Users create Docker containers by issuing a docker run command, part of the Docker command-line interface.

2. The Docker daemon (dockerd) receives the request and passes it to containerd, the daemon that pulls the necessary images.

3. containerd forwards the data to runC, a container runtime that creates the container.
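
The first of these steps can be sketched as a small helper that assembles a `docker run` command line. The function name, the container name, and the port mapping are assumptions made for illustration; `--detach`, `--name`, and `--publish` are standard `docker run` flags. The helper only builds the argument list, so it can be inspected without a Docker installation.

```python
import shlex


def docker_run_command(image, name=None, ports=None, detach=True):
    """Build the argument list for a `docker run` invocation."""
    argv = ["docker", "run"]
    if detach:
        argv.append("--detach")  # run the container in the background
    if name:
        argv += ["--name", name]
    for host_port, container_port in (ports or {}).items():
        # Map a host port to a port inside the container.
        argv += ["--publish", f"{host_port}:{container_port}"]
    argv.append(image)
    return argv


# Hypothetical example: an nginx container reachable on host port 8080.
command = docker_run_command("nginx:1.25", name="web", ports={8080: 80})
print(shlex.join(command))
```

Passing the resulting list to `subprocess.run(command, check=True)` would hand the request to the Docker client, which starts the chain described in the steps above.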

Once Docker spins up a container from the specified Docker image, the container becomes a stable environment for developing and testing software. Containers are portable, compact, isolated runtime environments that can be easily created, modified, and deleted.

Docker Use Cases

Docker is used as a practical tool for packaging applications into lightweight and portable containers. Since a container consists of all the needed libraries and dependencies for a particular application, developers can easily pack, transfer, and run new app instances anywhere they like.

Additionally, Docker and other virtualization solutions are crucial in DevOps, allowing developers to test and deploy code faster and more efficiently. Utilizing containers simplifies DevOps by enabling continuous delivery of software to production.

Containers are isolated environments, meaning developers can set up an app and ensure it runs as programmed regardless of its host and underlying hardware. This property is especially useful when working on different servers, as it allows you to test new features and ensure environment stability.

Differences Between Docker and Kubernetes

As previously stated in the article, Docker and Kubernetes both deal with containers, but their purpose in this ecosystem is very different.

Docker is a container runtime that helps create and manage containers on a single system. While tools such as Docker Swarm allow orchestration of Docker containers across multiple systems, this feature is not a part of core Docker.

Kubernetes manages a cluster of nodes, each running a compatible container runtime. This means that Kubernetes sits one level above Docker in the container ecosystem because it manages container runtime instances, including Docker (which, since Kubernetes 1.24, requires the cri-dockerd adapter).

Using Docker and Kubernetes Together

When used side-by-side, Docker and Kubernetes provide an efficient way to develop and run applications. Since Kubernetes was designed with Docker in mind, they work together seamlessly and complement each other.

Ultimately, you can pack and ship applications inside containers with Docker and deploy and scale them with Kubernetes. Both technologies help you run more scalable, environment-agnostic, and robust applications.
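
A minimal sketch of that division of labor is shown below: Docker builds and publishes the image, and Kubernetes deploys it from a manifest. The image name, registry host, and manifest path are hypothetical, and the helper functions are invented for this example. The commands themselves (`docker build --tag`, `docker push`, and `kubectl apply --filename`) are standard CLI invocations. The pipeline defaults to a dry run so that it prints the commands instead of executing them.

```python
import subprocess


def build_and_deploy_commands(image, manifest_path, context="."):
    """Return the commands for a build, push, and deploy sequence."""
    return [
        ["docker", "build", "--tag", image, context],       # package the app
        ["docker", "push", image],                          # publish the image
        ["kubectl", "apply", "--filename", manifest_path],  # deploy to the cluster
    ]


def run_pipeline(commands, dry_run=True):
    """Print each command, or execute it when dry_run is False."""
    for argv in commands:
        if dry_run:
            print(" ".join(argv))
        else:
            subprocess.run(argv, check=True)  # stop at the first failing step


# Hypothetical image reference and manifest file.
steps = build_and_deploy_commands("registry.example.com/team/web:1.0", "deployment.json")
run_pipeline(steps)
```

Keeping the steps as plain argument lists makes it straightforward to move the same sequence into a CI/CD job later.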

Conclusion

After reading this comparison article, you should understand the fundamental difference between Kubernetes and Docker. The article also highlighted how Kubernetes and Docker help each other in the application development process.