Introduction

Modern websites and applications handle heavy traffic and serve many client requests simultaneously. Load balancing helps meet these requests and keeps websites and applications fast and reliable.

In this article, you will learn what load balancing is, how it works, and which types of load balancing exist.

Load Balancing Definition

Load balancing distributes high network traffic across multiple servers, allowing organizations to scale horizontally to meet high-traffic workloads. Load balancing routes client requests to available servers to spread the workload evenly and improve application responsiveness, thus increasing website availability.

Load balancing applies to layers 4-7 in the seven-layer Open Systems Interconnection (OSI) model. Its capabilities are:

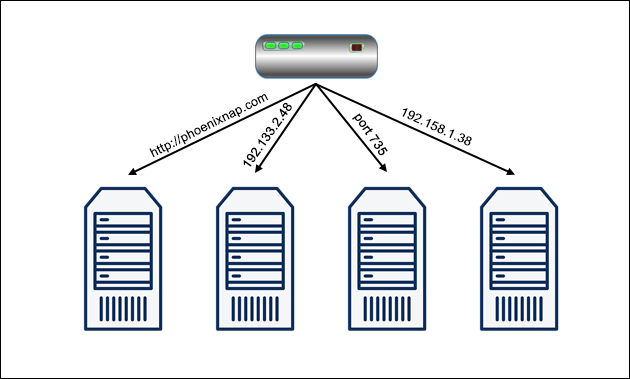

- L4. Directs traffic based on data from network and transport layer protocols, e.g., IP address and TCP port.

- L7. Adds content switching to load balancing, allowing routing decisions depending on characteristics such as HTTP header, uniform resource identifier, SSL session ID, and HTML form data.

- GSLB. Global Server Load Balancing expands L4 and L7 capabilities to servers in different sites.
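
The difference between an L4 and an L7 decision can be sketched in a few lines of Python. This is only an illustration: the `Request` class, the pool tables, and the IP addresses are hypothetical and do not come from any specific load balancer.

```python
from dataclasses import dataclass, field


@dataclass
class Request:
    """Hypothetical request record used only for this illustration."""
    client_ip: str
    dest_port: int
    path: str = "/"
    headers: dict = field(default_factory=dict)


# Assumed example pools; the addresses are placeholders.
L4_POOLS = {443: ["10.0.0.1", "10.0.0.2"], 25: ["10.0.1.1"]}
L7_RULES = [("/api/", ["10.0.2.1", "10.0.2.2"]), ("/static/", ["10.0.3.1"])]
DEFAULT_POOL = ["10.0.9.1"]


def pick_pool_l4(req: Request) -> list:
    # L4: only network/transport data (here, the TCP port) is inspected.
    return L4_POOLS.get(req.dest_port, DEFAULT_POOL)


def pick_pool_l7(req: Request) -> list:
    # L7: content switching, here based on the URI path prefix.
    for prefix, pool in L7_RULES:
        if req.path.startswith(prefix):
            return pool
    return DEFAULT_POOL


req = Request(client_ip="203.0.113.7", dest_port=443, path="/api/users")
print(pick_pool_l4(req))  # pool chosen from the port alone
print(pick_pool_l7(req))  # pool chosen from the request path
```

The same request can land in different pools depending on which layer the decision is made at, because the L7 function sees the path while the L4 function does not.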

Note: Read our article and learn how to install NGINX on Ubuntu 20.04. NGINX is a free, open-source web server that also works as a reverse proxy and load balancer.

Why Is Load Balancing Important?

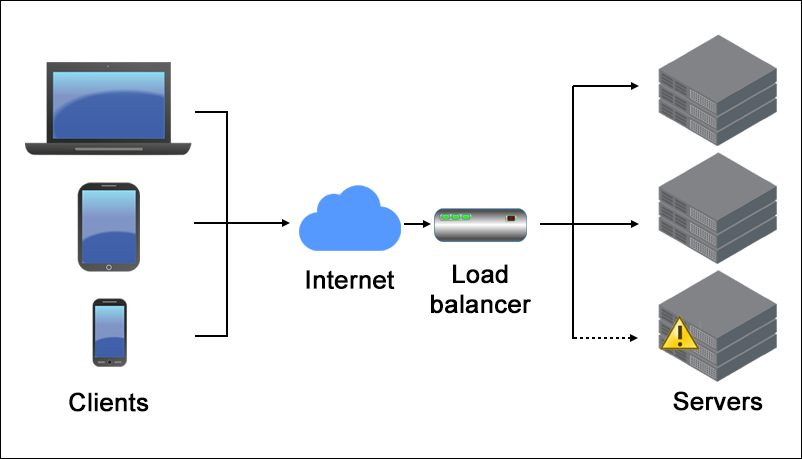

Load balancing is essential to maintain the information flow between the server and user devices used to access the website (e.g., computers, tablets, smartphones).

There are several load balancing benefits:

- Reliability. A website or app must provide a good UX even when traffic is high. Load balancers handle traffic spikes by moving data efficiently, optimizing application delivery resource usage, and preventing server overloads. That way, the website performance stays high, and users remain satisfied.

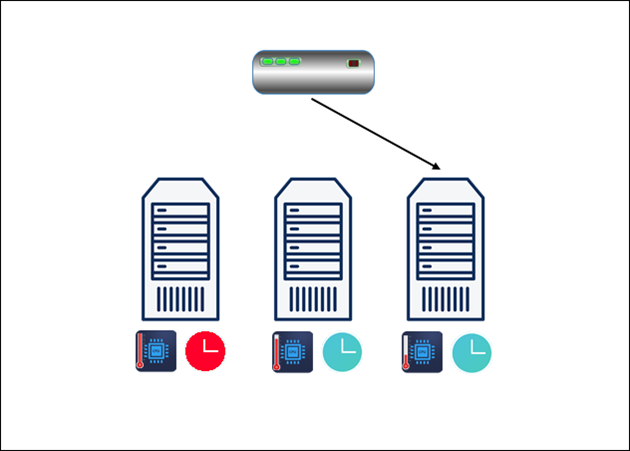

- Availability. Load balancers run periodic health checks against the host machines to ensure they can receive requests. If a host machine is down, the load balancer redirects requests to the other available machines.

Load balancers also remove faulty servers from the pool until the issue is resolved. Some load balancers even create new virtualized application servers to meet an increased number of requests.

- Security. Load balancing is becoming a requirement for most modern applications, especially as load balancers gain security features with the evolution of cloud computing. The load balancer's off-loading function protects against DDoS attacks by shifting attack traffic to a public cloud provider instead of the corporate server.

Note: Another way to defend against DDoS attacks is to use mod_evasive on Apache.

- Predictive Insight. Load balancing includes analytics that can predict traffic bottlenecks and allow organizations to prevent them. The predictive insights boost automation and help organizations make decisions for the future.

How Does Load Balancing Work?

Load balancers sit between the application servers and the users on the internet. Once the load balancer receives a request, it determines which server in a pool is available and then routes the request to that server.

By routing the requests to available servers or servers with lower workloads, load balancing takes the pressure off stressed servers and ensures high availability and reliability.

Load balancers can dynamically add or drop servers when demand is high or low, which provides flexibility in adjusting to demand.

Load balancing also provides failover in addition to boosting performance. The load balancer redirects the workload from a failed server to a backup one, mitigating the impact on end-users.
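
The routing and failover behavior described in this section can be sketched as follows. This is a minimal illustration under stated assumptions: the `ServerPool` class, its method names, and the `probe` callback are hypothetical, and a real load balancer would probe servers over the network (for example, with a TCP connect or an HTTP request) rather than call a local function.

```python
class ServerPool:
    """Hypothetical pool that tracks which servers passed their last health check."""

    def __init__(self, servers):
        # Every server starts out marked as healthy.
        self.healthy = {name: True for name in servers}

    def run_health_checks(self, probe):
        # `probe` is an assumed callback: it takes a server name and
        # returns True if the server answered the health check.
        for name in self.healthy:
            self.healthy[name] = bool(probe(name))

    def available(self):
        return [name for name, ok in self.healthy.items() if ok]

    def route(self):
        # Send the request to the first available server; failed servers
        # are skipped until a later health check marks them healthy again.
        candidates = self.available()
        if not candidates:
            raise RuntimeError("no healthy servers in the pool")
        return candidates[0]


pool = ServerPool(["app-1", "app-2", "app-3"])
pool.run_health_checks(lambda name: name != "app-1")  # simulate app-1 failing
print(pool.route())  # app-1 is skipped while it is marked unhealthy
```

Here the selection step simply takes the first healthy server. In practice, that step is where one of the algorithms from the Load Balancing Algorithms section would plug in.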

Note: Monitor the performance of your servers using one of these 17 best server monitoring tools.

Types of Load Balancing

Load balancers vary in deployment type, complexity, and functionality. The different types of load balancers are explained below.

Hardware-Based

A hardware-based load balancer is dedicated hardware with proprietary software installed. It can process large amounts of traffic from various application types.

Hardware-based load balancers contain built-in virtualization capabilities that allow multiple virtual load balancer instances on the same device.

Software-Based

A software-based load balancer runs on virtual machines or white box servers, usually as part of an application delivery controller (ADC). Software-based load balancing offers greater flexibility than the hardware-based kind.

Software-based load balancers run on common hypervisors, containers, or as Linux processes with negligible overhead on a bare metal server.

Virtual

A virtual load balancer combines the two types above by running the proprietary software of a dedicated hardware load balancer on a virtual machine. However, virtual load balancers cannot overcome the architectural challenges of limited scalability and automation.

Cloud-Based

Cloud-based load balancing utilizes cloud infrastructure. Some examples of cloud-based load balancing are:

- Network Load Balancing. Network load balancing relies on layer 4 and uses network layer information to determine where to send network traffic. It is the fastest load balancing option, but it is less effective at distributing traffic evenly across servers.

- HTTP(S) Load Balancing. HTTP(S) load balancing relies on layer 7. It is one of the most flexible load balancing types, allowing administrators to make traffic distribution decisions based on any information that comes with an HTTP request.

- Internal Load Balancing. Internal load balancing is almost identical to network load balancing, except that it balances traffic within internal infrastructure.

Load Balancing Algorithms

Different load balancing algorithms offer different benefits and complexity, depending on the use case. The most common load balancing algorithms are:

Round Robin

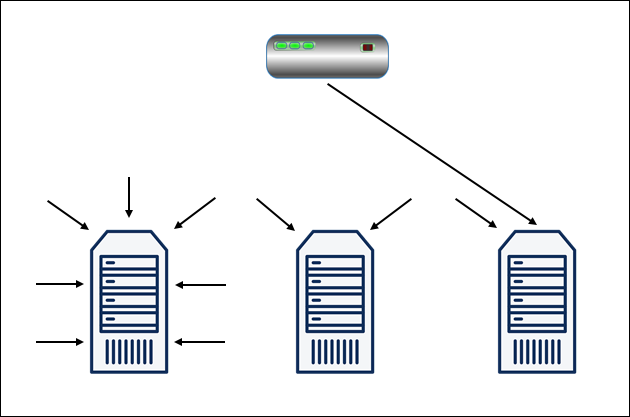

The Round Robin algorithm distributes requests sequentially to the first available server and then moves that server to the end of the queue. It is used for pools of equal servers, but it doesn't consider the load already present on each server.
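
A minimal Python sketch of the queue rotation described above, assuming a fixed pool in which every server is available. The class name, method name, and server names are hypothetical.

```python
from collections import deque


class RoundRobinBalancer:
    """Hypothetical round-robin selector over a fixed pool of servers."""

    def __init__(self, servers):
        self._queue = deque(servers)
        if not self._queue:
            raise ValueError("the server pool must not be empty")

    def next_server(self):
        # Take the server at the front of the queue, then rotate the
        # queue so that server moves to the end.
        server = self._queue[0]
        self._queue.rotate(-1)
        return server


lb = RoundRobinBalancer(["app-1", "app-2", "app-3"])
print([lb.next_server() for _ in range(4)])
# ['app-1', 'app-2', 'app-3', 'app-1']
```

Each server receives every third request regardless of how busy it is, which is the limitation noted above.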

Least Connections

The Least Connections algorithm sends each new request to the least busy server, that is, the one with the fewest active connections. It is used when there are many unevenly distributed persistent connections in the server pool.
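
As a rough sketch, Least Connections only needs a per-server counter of open connections. The class and method names below are hypothetical, and ties are broken by pool order, which is an assumption of this example.

```python
class LeastConnectionsBalancer:
    """Hypothetical selector that tracks active connections per server."""

    def __init__(self, servers):
        self.active = {server: 0 for server in servers}

    def acquire(self):
        # Pick the server with the fewest active connections
        # (the first one in pool order wins a tie).
        server = min(self.active, key=self.active.get)
        self.active[server] += 1
        return server

    def release(self, server):
        # Call this when a connection to `server` closes.
        if self.active[server] > 0:
            self.active[server] -= 1


lb = LeastConnectionsBalancer(["app-1", "app-2"])
first = lb.acquire()   # app-1 (tie, first in pool order)
second = lb.acquire()  # app-2 (app-1 already has one connection)
lb.release(first)      # the connection to app-1 closes
print(lb.acquire())    # app-1 again, since it now has no connections
```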

Least Response Time

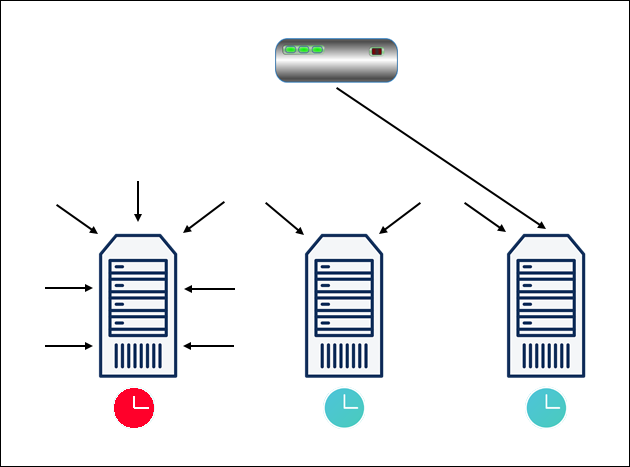

Least Response Time load balancing distributes requests to the server with the fewest active connections and with the fastest average response time to a health monitoring request. The response speed indicates how loaded the server is.
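
One possible way to combine the two criteria is to compare servers by connection count first and by average response time second. That ordering is an assumption made for this sketch, as are the `ServerStats` class and its field names. Real load balancers may weigh the two values differently.

```python
from dataclasses import dataclass


@dataclass
class ServerStats:
    """Hypothetical per-server statistics kept by the load balancer."""
    name: str
    active_connections: int = 0
    avg_response_ms: float = 0.0
    samples: int = 0

    def record_response(self, ms: float) -> None:
        # Update the running mean of health-check response times.
        self.samples += 1
        self.avg_response_ms += (ms - self.avg_response_ms) / self.samples


def pick_least_response_time(servers):
    # Assumed rule: fewest active connections first,
    # fastest average response time as the tie-breaker.
    return min(servers, key=lambda s: (s.active_connections, s.avg_response_ms))


a = ServerStats("app-1", active_connections=2)
b = ServerStats("app-2", active_connections=2)
a.record_response(120)
a.record_response(80)   # app-1 now averages 100 ms
b.record_response(40)   # app-2 averages 40 ms
print(pick_least_response_time([a, b]).name)  # app-2
```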

Hash

The Hash algorithm determines where to distribute requests based on a designated key, such as the client IP address, port number, or the request URL. The Hash method is used for applications that rely on user-specific stored information, for example, carts on e-commerce websites.
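
A minimal sketch of hash-based selection, assuming the key is the client IP address. The function name and server names are hypothetical, and SHA-256 is just one reasonable choice of hash function here.

```python
import hashlib


def pick_by_hash(key: str, servers: list) -> str:
    """Map a key (for example, a client IP) to one server in the pool."""
    # Use a stable hash so the same key maps to the same server
    # across processes and restarts.
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    index = int.from_bytes(digest[:8], "big") % len(servers)
    return servers[index]


servers = ["app-1", "app-2", "app-3"]
# The same client always lands on the same server while the pool is unchanged.
print(pick_by_hash("203.0.113.7", servers) == pick_by_hash("203.0.113.7", servers))
```

Because the same key always produces the same index, a returning user reaches the server that holds their stored data. Note that with plain modulo hashing, adding or removing a server remaps many keys. Consistent hashing is a common way to reduce that effect.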

Custom Load

The Custom Load algorithm directs requests to individual servers based on load data that the load balancer queries via SNMP (Simple Network Management Protocol). The administrator defines which server load metrics the load balancer takes into account when routing a request (e.g., CPU usage, memory usage, and response time).
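
The selection step can be sketched as a weighted score over the reported metrics. Everything specific in this example is an assumption: the weights, the metric names, and the 1000 ms normalization cap are hypothetical, and the metrics are hard-coded here instead of being polled over SNMP.

```python
# Assumed administrator-defined weights for each metric (they sum to 1.0).
WEIGHTS = {"cpu": 0.5, "memory": 0.3, "response": 0.2}


def load_score(metrics: dict, max_response_ms: float = 1000.0) -> float:
    """Combine CPU, memory, and response time into one score (lower is better)."""
    # CPU and memory are fractions in [0, 1]; response time is scaled into
    # the same range and capped at 1.0.
    response = min(metrics["response_ms"] / max_response_ms, 1.0)
    return (
        WEIGHTS["cpu"] * metrics["cpu"]
        + WEIGHTS["memory"] * metrics["memory"]
        + WEIGHTS["response"] * response
    )


def pick_by_custom_load(reported: dict) -> str:
    # Route to the server with the lowest combined load score.
    return min(reported, key=lambda name: load_score(reported[name]))


# In a real deployment these values would come from SNMP queries.
reported = {
    "app-1": {"cpu": 0.9, "memory": 0.5, "response_ms": 200},
    "app-2": {"cpu": 0.3, "memory": 0.6, "response_ms": 300},
}
print(pick_by_custom_load(reported))  # app-2
```

In this example, app-2 wins despite slightly higher memory usage and response time because CPU usage carries the largest weight.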

Note: Learn how to handle website traffic surges by deploying WordPress on Kubernetes. This enables you to horizontally scale a website.

Conclusion

Now you know what load balancing is and how it enhances server performance and security and improves the user experience.

The different algorithms and load balancing types suit different situations, and you should now be able to choose the right load balancer type for your use case.